In my first pitch, I introduced MotionX as a general-purpose movement analysis tool, built on the idea that anyone with a phone camera should be able to get expert-level biomechanical feedback without six-figure motion capture equipment. That core vision hasn’t changed, but my focus has sharpened.

I’m a rower. Rowing is a sport where millimeters and milliseconds matter, which makes it a sport that needs this tool especially urgently. A rowing coach stands on the shore watching four or eight athletes cycle through strokes at 30+ strokes per minute. By the time practice is over, most of what happened is gone from memory. Coaches film practice sessions, and afterward they review the video by pausing and rewinding to examine body positions, trying to turn a blurry mental picture into concrete feedback. It takes hours, and the results are still subjective.

Right now, the feedback loop between “what happened on the water” and “what to fix” is almost entirely manual. A coach might pause a video, eyeball the catch angle, and say “your knees look too compressed.” But is it 5 degrees off or 20? Is it getting worse over the session? Is one athlete dragging the crew’s timing off?

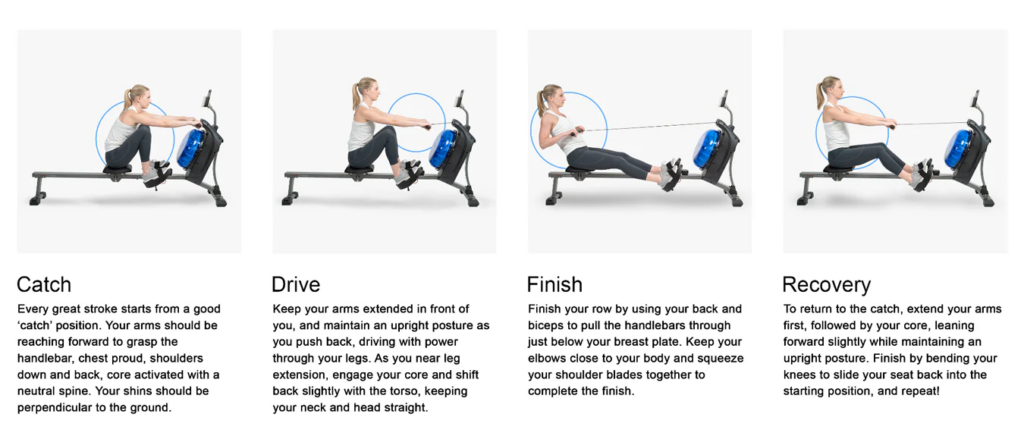

Even a 5-degree difference at the catch can determine whether you’re compressing efficiently or loading your lower back. Multiply that across 200 strokes and an entire crew, and small errors compound into injury risk and lost speed that nobody can see in real time.

Updated definition statement: Build a web-based tool that takes a single rowing video as input, automatically identifies each athlete, tracks their body positions frame by frame, detects the key phases of each stroke (catch, drive, finish, recovery), scores their technique against reference standards, and presents the results in a way that a coach can immediately act on, all without requiring any special hardware beyond a phone camera.

Image showing all the body positions in a rowing stroke

The underlying pose estimation and scoring algorithms are sport-agnostic, meaning the same platform can expand to analyze basketball shooting form, physical therapy exercises, weightlifting technique, or any repetitive human movement, given the right reference recordings. The project is therefore not limited to a single sport. My current prototype is most polished for rowing, but it can be applied to other sports after some refinement.

The primary users are rowing coaches and athletes at the club and collegiate level. The secondary audience is anyone involved in repetitive full-body motion who could benefit from automated technique analysis (physical therapists, CrossFit coaches, etc.).

Here are the key technologies and background research that form the foundation of the project.

MediaPipe -> YOLOv8-pose

Worth mentioning: I have switched one of the core technologies from MediaPipe to YOLOv8. Both libraries perform pose estimation, identifying landmarks on the body. Previously, I worked with Google’s MediaPipe, which tracks 33 keypoints on a single person. However, rowing is a team sport, and a typical training video has 4 athletes in frame. I first explored a two-stage workaround: use a separate library to detect every person in the video, crop each one, and send each crop to MediaPipe for individual analysis. However, that approach would likely demand more memory and take longer to analyze. After evaluating alternatives, I switched to YOLOv8-pose, which natively supports multi-person detection with 17 keypoints per person. Apart from five facial points (nose, eyes, ears), those 17 keypoints cover the shoulders, elbows, wrists, hips, knees, and ankles, which capture exactly what matters for rowing biomechanics without the noise of MediaPipe’s 33 points (which add hand, foot, and extra facial landmarks that are irrelevant here).
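
To make the keypoint layout concrete, here is a minimal sketch, assuming the standard COCO 17-keypoint order that YOLOv8-pose models output. It maps each detected person’s keypoints to named joints and drops the five facial points. The names `COCO_KEYPOINTS`, `ROWING_JOINTS`, and `rowing_keypoints` are hypothetical, not part of MotionX or Ultralytics; the commented lines at the bottom show how the input could come from the Ultralytics `YOLO` API.

```python
# COCO-17 keypoint order, as used by YOLOv8-pose models.
COCO_KEYPOINTS = [
    "nose", "left_eye", "right_eye", "left_ear", "right_ear",
    "left_shoulder", "right_shoulder", "left_elbow", "right_elbow",
    "left_wrist", "right_wrist", "left_hip", "right_hip",
    "left_knee", "right_knee", "left_ankle", "right_ankle",
]
KP_INDEX = {name: i for i, name in enumerate(COCO_KEYPOINTS)}

# Hypothetical subset used for rowing: everything except the 5 facial points.
ROWING_JOINTS = COCO_KEYPOINTS[5:]

Point = tuple[float, float]


def rowing_keypoints(people: list[list[Point]]) -> list[dict[str, Point]]:
    """Map each detected person's 17 (x, y) keypoints to named rowing joints.

    `people` is assumed to have one list of 17 (x, y) pairs per detected person.
    """
    athletes = []
    for kps in people:
        if len(kps) != len(COCO_KEYPOINTS):
            raise ValueError(f"expected 17 keypoints, got {len(kps)}")
        athletes.append({
            name: (float(kps[KP_INDEX[name]][0]), float(kps[KP_INDEX[name]][1]))
            for name in ROWING_JOINTS
        })
    return athletes


# Possible usage with Ultralytics (not executed here; requires the package and weights):
# from ultralytics import YOLO
# model = YOLO("yolov8n-pose.pt")
# result = model("frame.jpg")[0]
# athletes = rowing_keypoints(result.keypoints.xy.tolist())
```

Keeping the keypoints as named dictionaries makes the later angle and scoring code read in rowing terms ("left_knee") rather than raw array indices.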

The market need is real: over 15 million physically active Canadians, a 287-billion-dollar global rehabilitation market, and a complete absence of accessible multi-person movement analysis tools. Professional systems (Vicon, Qualisys) cost upwards of 150,000 dollars. Consumer apps count reps. Nothing in between does what this project does.

In terms of deliverables, I am building a web-based application with a fully functional backend and frontend.

Backend (Python/FastAPI):

- Video upload: YOLOv8-pose processes every frame, detecting all athletes and extracting 17 keypoint positions per person per frame

- 8 joint angles calculated per athlete (left/right shoulders, elbows, hips, knees) using arctan2 on 2D coordinates

- Stroke phases detected per athlete using knee angle signal analysis

- Technique scored via DTW comparison against reference standards

- Annotated video generated with per-athlete color-coded skeleton overlays

- AI coaching reports via an AI API, fed with actual biomechanical data

- All heavy processing runs in background workers (Celery + Redis) so the user isn’t waiting on a frozen screen
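
The angle calculation can be sketched as follows, assuming each angle is the interior angle at the middle joint of a three-point chain (for example hip-knee-ankle for the knee). The triplet definitions in `ANGLE_TRIPLETS` are my assumption of which points form each of the 8 angles, and `joint_angle` and `compute_angles` are hypothetical names, not the prototype’s actual code.

```python
import math

Point = tuple[float, float]


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Interior angle in degrees (0-180) at vertex b, formed by segments b->a and b->c."""
    # atan2 gives the direction of each segment; their difference is the angle between them.
    ang_ba = math.atan2(a[1] - b[1], a[0] - b[0])
    ang_bc = math.atan2(c[1] - b[1], c[0] - b[0])
    deg = abs(math.degrees(ang_ba - ang_bc))
    # Fold reflex angles (> 180) back into the 0-180 range.
    return 360.0 - deg if deg > 180.0 else deg


# Assumed (first, vertex, last) triplets per joint; hypothetical, not the prototype's definitions.
_TRIPLETS = {
    "shoulder": ("elbow", "shoulder", "hip"),
    "elbow": ("shoulder", "elbow", "wrist"),
    "hip": ("shoulder", "hip", "knee"),
    "knee": ("hip", "knee", "ankle"),
}
ANGLE_TRIPLETS = {
    f"{side}_{joint}": tuple(f"{side}_{p}" for p in points)
    for side in ("left", "right")
    for joint, points in _TRIPLETS.items()
}


def compute_angles(joints: dict[str, Point]) -> dict[str, float]:
    """Compute all 8 angles for one athlete in one frame from named 2D joint positions."""
    return {
        name: joint_angle(joints[a], joints[b], joints[c])
        for name, (a, b, c) in ANGLE_TRIPLETS.items()
    }
```

Because the interior angle only depends on the two segment directions, it is unaffected by image coordinates having y pointing down. Being 2D, it will still be distorted when the camera is not roughly side-on to the boat.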
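
One way the knee-angle approach to stroke phases could work, sketched under a few assumptions: the catch is taken as a local minimum of the smoothed knee angle (legs most compressed), the finish as the maximum between two consecutive catches (legs flattest), the drive runs from catch to finish, and the recovery from finish to the next catch. The smoothing `window` and `min_gap` (minimum frames between catches) are hypothetical parameters that would need tuning to the video’s frame rate and stroke rate.

```python
def moving_average(signal: list[float], window: int = 5) -> list[float]:
    """Centered moving average; the window shrinks at the edges of the signal."""
    half = window // 2
    out = []
    for i in range(len(signal)):
        lo, hi = max(0, i - half), min(len(signal), i + half + 1)
        out.append(sum(signal[lo:hi]) / (hi - lo))
    return out


def detect_strokes(knee_angles: list[float], window: int = 5,
                   min_gap: int = 10) -> list[dict[str, tuple[int, int]]]:
    """Split a per-frame knee-angle series into (drive, recovery) frame ranges per stroke."""
    s = moving_average(knee_angles, window)
    catches: list[int] = []
    for i in range(1, len(s) - 1):
        # A local minimum of the smoothed knee angle is a candidate catch.
        if s[i] <= s[i - 1] and s[i] < s[i + 1]:
            if catches and i - catches[-1] < min_gap:
                # Too close to the previous catch (likely noise): keep the deeper minimum.
                if s[i] < s[catches[-1]]:
                    catches[-1] = i
            else:
                catches.append(i)

    strokes = []
    for catch, next_catch in zip(catches, catches[1:]):
        # Finish = most extended knee between two consecutive catches.
        finish = max(range(catch, next_catch + 1), key=lambda k: s[k])
        strokes.append({"drive": (catch, finish), "recovery": (finish, next_catch)})
    return strokes
```

Only complete strokes (catch to next catch) are returned, so partial strokes at the start and end of a clip are ignored; counting the returned strokes is one way to check against a manual stroke count.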
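
Dynamic time warping (DTW) aligns two sequences that follow the same shape at different speeds, which is why it suits comparing an athlete’s stroke against a reference stroke recorded at a different rate. Below is a minimal textbook DTW on one joint-angle series, plus a hypothetical `dtw_score` that maps the distance to 0-100; the normalization and `scale` value are assumptions, not the prototype’s actual scoring formula.

```python
def dtw_distance(a: list[float], b: list[float]) -> float:
    """Classic O(n*m) DTW distance between two 1-D series using absolute difference."""
    if not a or not b:
        raise ValueError("both series must be non-empty")
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] = cheapest alignment of the first i items of a with the first j items of b.
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            # Extend the best of: skip in a, skip in b, or advance both.
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]


def dtw_score(athlete: list[float], reference: list[float], scale: float = 10.0) -> float:
    """Hypothetical 0-100 score: 100 for a perfect match, decaying as average error grows."""
    # Normalize by total length so longer strokes aren't penalized just for having more frames.
    avg = dtw_distance(athlete, reference) / (len(athlete) + len(reference))
    return 100.0 / (1.0 + avg / scale)
```

For example, `dtw_distance([1, 1, 2, 3], [1, 2, 3])` is `0.0`: the repeated frame is absorbed by the warping, which a plain frame-by-frame difference would count as error.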

Frontend (React/TypeScript):

- Upload page with configuration (crew type, model quality, frame skip rate)

- Real-time progress bar while analysis runs

- Results dashboard with: video player (toggle between original and skeleton overlay), per-rower score breakdowns, radar charts, stroke rate timelines, angle statistics, and a Key Frame Viewer that shows the exact catch and finish positions for every stroke

- AI coaching page with report generation and interactive chat

- Standards library for uploading reference videos to score against

How I’ll test it against the definition statement:

The core question is whether a coach can watch a practice, upload the video, and get actionable feedback faster than they could by manually reviewing the footage. I plan to test this with actual rowing coaches: have them review the same video manually, then compare their notes to the system’s output. If the system identifies the same issues (and finds additional ones the coach missed), the definition is met.
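
To make that comparison repeatable, the coach’s notes and the system’s output could be normalized into shared issue labels and compared as sets. This is a minimal sketch; the label format (`"seat_2:knees_overcompressed"`) and `compare_findings` are hypothetical, and it treats the definition as met when every coach-flagged issue is also found by the system.

```python
def compare_findings(coach_issues: list[str], system_issues: list[str]) -> dict:
    """Compare normalized issue labels from a coach's manual review and the system's report."""
    coach, system = set(coach_issues), set(system_issues)
    matched = coach & system
    return {
        "matched": sorted(matched),
        "missed": sorted(coach - system),   # coach saw it, system did not
        "extra": sorted(system - coach),    # system flagged it, coach did not
        "recall": len(matched) / len(coach) if coach else 1.0,
        "meets_definition": coach <= system,  # every coach issue was also found
    }
```

The `extra` list is where "additional issues the coach missed" would show up, though each one still needs a coach to confirm it is real rather than a false positive.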

Progress So Far

According to my timeline, I’m at the end of Research & Planning and transitioning into active development. Here’s what’s actually built and working:

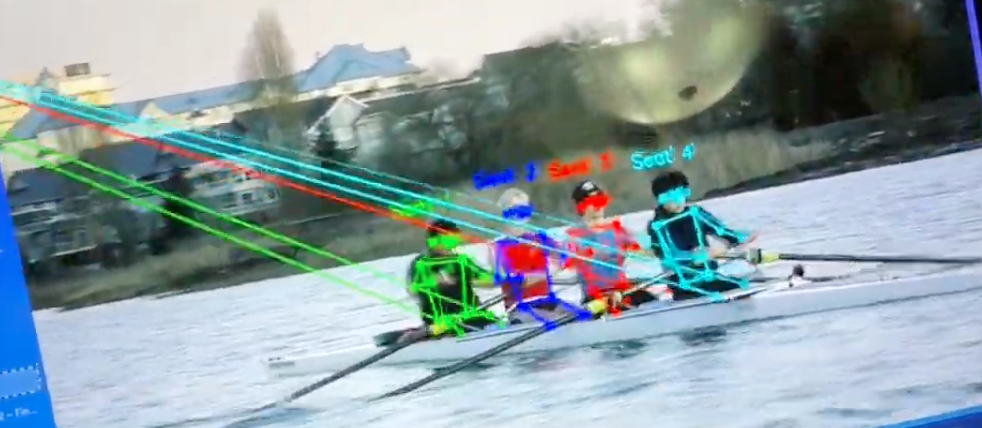

Sorry for the crappy screenshot: my laptop screen broke while I was writing this blog, so I didn’t have direct access to my code.

Complete and functional:

- Full video upload, multi-person pose analysis, and results pipeline, tested with real 4-rower crew footage

- Stroke phase detection producing correct stroke counts validated against manual review

- DTW technique scoring and per-joint angle bracket scoring

- Annotated video generation with color-coded skeleton overlays per athlete

- Docker configuration for easy deployment
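
The per-joint angle bracket scoring isn’t described in detail here, so the following is only a guess at one plausible form: each joint gets an acceptable (low, high) range for a key frame such as the catch, angles inside the range score 100, and the score falls off linearly outside it over a `tolerance` in degrees. `bracket_score`, `score_key_frame`, and any ranges passed in are hypothetical placeholders, not real rowing standards.

```python
def bracket_score(angle: float, low: float, high: float, tolerance: float = 15.0) -> float:
    """100 inside [low, high]; linear falloff to 0 once the angle is `tolerance` degrees outside."""
    if low > high:
        raise ValueError("low must not exceed high")
    if low <= angle <= high:
        return 100.0
    # Distance (in degrees) from the nearest edge of the bracket.
    miss = low - angle if angle < low else angle - high
    return max(0.0, 100.0 * (1.0 - miss / tolerance))


def score_key_frame(angles: dict[str, float],
                    brackets: dict[str, tuple[float, float]],
                    tolerance: float = 15.0) -> tuple[dict[str, float], float]:
    """Score each bracketed joint at one key frame; return per-joint scores and their mean."""
    per_joint = {
        joint: bracket_score(angles[joint], low, high, tolerance)
        for joint, (low, high) in brackets.items()
        if joint in angles  # skip joints that weren't detected in this frame
    }
    overall = sum(per_joint.values()) / len(per_joint) if per_joint else 0.0
    return per_joint, overall
```

Bracket scoring complements DTW: DTW judges the shape of the whole stroke, while brackets give a coach a direct "knee angle at the catch was X degrees outside the target range" message.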

https://drive.google.com/file/d/1IHZYBFvpiexR87abAfk5bKIj4vBXu6M9/view?usp=sharing

This is a demo video of the prototype. Unfortunately, the sound wasn’t working. In it, I showcase the backend of the current prototype, running in my terminal inside a Docker environment. The backend is mainly responsible for handling database requests and running the analysis pipeline in the background. I uploaded a video of a crew rowing, and the system successfully tracks all four people moving in the boat. The page also currently provides a general analysis of each athlete’s body movement.

During development, I expect to face several challenges:

- In certain frames, the program loses track of the athletes and fails to recognize them. Most of the time, this is due to poor video clarity or footage shot from too far away. Other factors, such as lighting, also play a role.

- Performance-wise, the analysis currently runs locally on my laptop and takes longer than I expected (2-3 minutes). According to an AI assistant I consulted, deploying the backend to a server should bring the processing time under a minute.

- I plan to add an AI feature, which means finding a way to send all the motion tracking data to the AI, whether by formatting it as JSON or by giving the AI specific instructions for interpreting it.
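
One way to approach the JSON option is to summarize the per-frame data before sending it, since raw keypoints for every frame would be far larger than an AI model needs. This sketch, with hypothetical field names (`stroke_rate_spm`, `angle_stats`, etc.) and a hypothetical `build_ai_payload` function, reduces each athlete’s angle series to min/max/mean and packages them with scores as JSON; it does not call any particular AI API.

```python
import json
from statistics import mean


def build_ai_payload(crew_type: str, athletes: dict[str, dict]) -> str:
    """Summarize per-athlete results into a compact JSON string for an AI prompt.

    `athletes` maps a hypothetical athlete id to
    {"scores": {...}, "stroke_rate_spm": float, "angles": {joint: [degrees per frame]}}.
    """
    summary = []
    for athlete_id, data in athletes.items():
        # Reduce each per-frame angle series to a few statistics to keep the prompt small.
        angle_stats = {
            joint: {
                "min": round(min(series), 1),
                "max": round(max(series), 1),
                "mean": round(mean(series), 1),
            }
            for joint, series in data["angles"].items()
            if series  # skip joints with no detections
        }
        summary.append({
            "athlete": athlete_id,
            "stroke_rate_spm": data["stroke_rate_spm"],
            "scores": data["scores"],
            "angle_stats": angle_stats,
        })
    return json.dumps({"crew_type": crew_type, "athletes": summary}, indent=2)
```

The resulting string could then be placed in the prompt alongside instructions for how to interpret each field, which combines both options mentioned above.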

In the upcoming weeks, I will focus on the online motion analysis phase, which is my #1 priority: making sure all the core functions work and that the analysis is accurate, specifically for rowing videos. I also plan to ask coaches what they hope to get from the analysis. After that, I can move on to polishing the entire system: adding a user system, integrating AI, and refining the UI/UX and overall experience.

I am happy with my progress so far and excited to continue working on this project after the break. I hope to deliver it to a wider audience in the near future.

Have a great spring break!
